The Interface Ecology Lab fosters integrative research projects spanning hardware, software, and theory, producing natural user interfaces, creativity support environments, games, interaction techniques, visualization algorithms, semantics, programming languages, interactive installations, and evaluation methodologies.

research areas

People engaged in ideation tasks curate: they collect, assemble, write, sketch, and exhibit.

Free-form web curation is a spontaneous, improvisational, and divergent creative process that involves choosing elements, sketching, writing, and assembling them spatially.

Free-form web curation is non-linear and unrestricted, breaking out of lists and grids to support the synthesis and emergence of diverse ideas.

Body-based interaction integrates multi-modal sensing with interface design and gesture recognition.

We investigate body-based action for human expression in computing, addressing ideation and play.

Live media places bring together various media forms to support participatory shared experiences in online communities. We design tools to help people create more expressive and engaging live experiences in contexts such as online learning and games.

Games and Play are fun and creative forms of human experience.

We study emerging culture and media surrounding game play.

We investigate game design through play, culture, interface, and media perspectives.

Web semantics describe and derive significant attributes and relationships of digital content.

We design an innovative type system for deriving and presenting web semantics for diverse content (e.g., digital libraries, e-commerce, social media) to provide context to users engaged in information-based ideation and curation.

current projects

LiveMâché is our current design curation probe.

This art-inspired web app provides live, collaborative capabilities for collecting and organizing content, along with writing, sketching, chat, and live streaming video.

prior projects

The Art.CHI installation provides a movement-based, spatial and visual interface for navigating a multi-scale information composition, which holistically represents an online art gallery.

IdeaMâché is our previous web app that uses free-form web curation as a medium of expression.

Curation is the process of creating and assembling information in meaningful exhibits, for people to think about.

TweetBubble is a Chrome extension that helps Twitter users engage in exploratory browsing by following associational chains of tweets through #hashtags and @users.

Our study shows that this increases the variety of content people explore, which helps them develop multiple perspectives on a topic.

TweetBubble makes browsing a more fun and fluid experience.

Cross-surface interaction addresses moving information objects across multi-display environments that support sensory interaction modalities such as touch, pen, and free-air.

It is characterized by user experiences of continuously manipulating information across interactive surfaces, providing a sense of connection.

Hurricane Recovery: Collecting Locative Media to Rebuild Local Knowledge

We engaged in iterative, participatory design, reaching out to Hurricane Katrina evacuee communities.

We gathered information about needs and desires, building situated semantics and a locative media collection sensemaking system.

Digital photographs were connected with GPS sensor data, semantics, a zoomable map interface, and an image clustering algorithm.

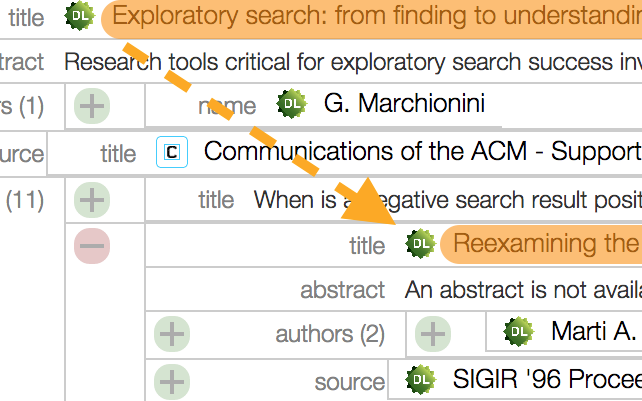

BigSemantics is an open-source software architecture and language for developing applications that present interactive web semantics. Developers author wrappers to create semantic types, while inheriting from existing types.

The wrapper repository provides types for common web resources.

BigSemantics includes the Metadata In-Context Expander (MICE), an example HTML5 component for exploring linked web semantics in one context.

Test Collection aggregates a set of documents, a clearly formed problem that an algorithm is supposed to solve, and the answers that the algorithm should produce when executed on the documents.

The Test Collection Digital Library System enables collecting and labeling documents, and publishing.
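As a rough illustration of the three parts a test collection brings together (documents, a problem statement, and expected answers), the Python sketch below scores an algorithm against a tiny hand-made collection. It is not the Test Collection Digital Library System's actual data model or API; the names `LabeledTestCollection`, `evaluate`, and `starts_with_abstract`, along with the sample documents and labels, are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class LabeledTestCollection:
    """Hypothetical container: a problem, its documents, and expected answers."""
    problem: str
    documents: Dict[str, str]  # document id -> document text
    answers: Dict[str, str]    # document id -> answer the algorithm should produce


def evaluate(collection: LabeledTestCollection,
             algorithm: Callable[[str], str]) -> float:
    """Return the fraction of documents for which the algorithm's output
    matches the expected answer."""
    if not collection.documents:
        return 0.0
    correct = sum(
        1
        for doc_id, text in collection.documents.items()
        if algorithm(text) == collection.answers.get(doc_id)
    )
    return correct / len(collection.documents)


# Made-up sample data, for illustration only.
collection = LabeledTestCollection(
    problem="Label each document as 'paper' or 'other'",
    documents={
        "d1": "Abstract. We present a study of curation.",
        "d2": "Buy now and get free shipping.",
        "d3": "Abstract. This work examines play.",
    },
    answers={"d1": "paper", "d2": "other", "d3": "paper"},
)


def starts_with_abstract(text: str) -> str:
    """Toy algorithm under test: a document is a 'paper' if it opens with 'Abstract'."""
    return "paper" if text.startswith("Abstract") else "other"


score = evaluate(collection, starts_with_abstract)
print(f"accuracy: {score:.2f}")
```

Keeping the expected answers alongside the documents means any candidate algorithm can be scored the same way, which is what makes a published collection reusable for comparison.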
